I’ve always liked rucks.

I’m a firm believer that one of the best things a team can do for its marketability is having a really tall guy who stands out in looks and play. My kids are currently Max Gawn supporters first and Melbourne supporters second.

As a Melbourne supporter approaching 40, I feel like I’ve seen my fair share of good rucks and then some. Of the 34 All Australian teams named since 1991, Melbourne has provided the first choice ruck eight times, plus another three inclusions on the interchange bench (Gawn 5+2, Jamar 0+1, White 1, Stynes 2).

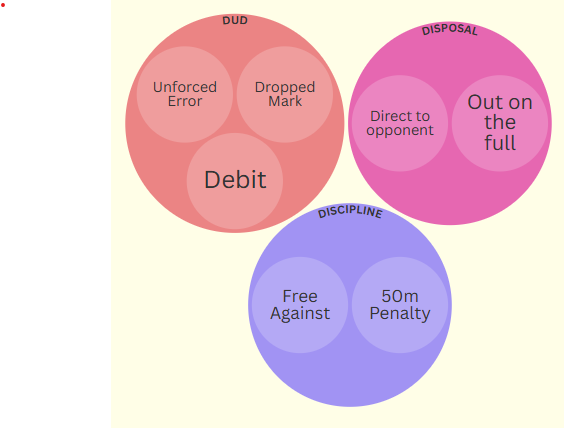

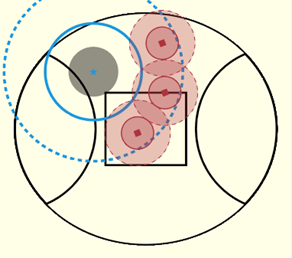

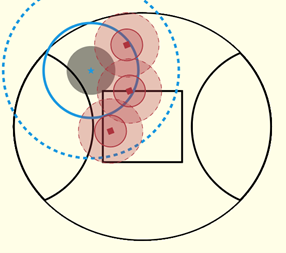

While we’ve had stats on hit outs and hit outs to advantage for a while, there’s a lack of understanding of how much these impact the game. Let’s look at the potential outcomes of a ruck contest and see where the most value for a team is.

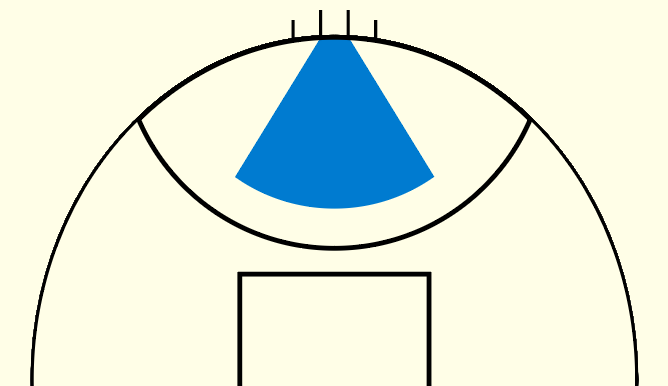

As you’d expect, getting a free from the ruck contest is by far the best outcome, followed by hit outs to advantage. Ruck hard ball gets also put you in a good position.

If we look at hitouts overall though, even when including hit outs to advantage as part of that, they barely move the needle. A quarter of them don’t lead to a clearance at all, and teams are almost as likely to concede a clearance or scoring opportunity after winning a hitout as they are to generate one.

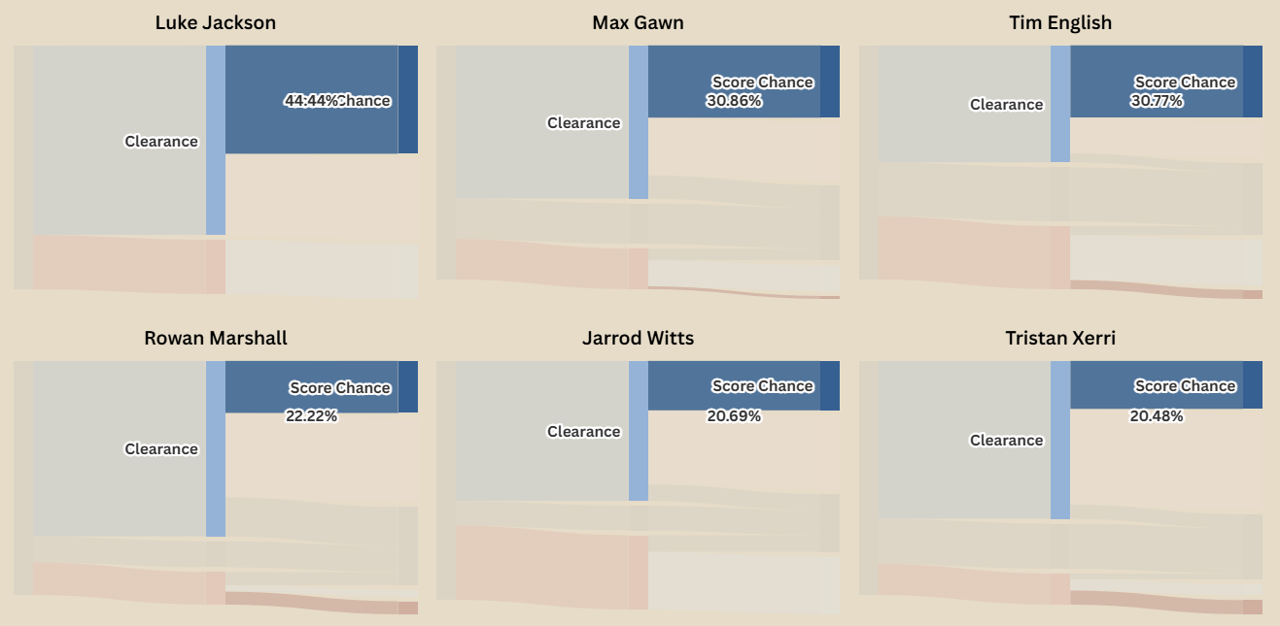

Another consideration is that two Ruck Hard Ball Gets aren’t necessarily the same. Let’s break down some of these outcomes by the top 20 rucks (by hitouts recorded in 2025).

(Selecting one of the flows will highlight that flow across all charts, allowing for easier comparison)

In terms of getting the ball moving, Oscar McInerney and Brodie Grundy are kings – 85% and 80% of their RHBGs resulting in a clearance for their team. For the next step along, Luke Jackson generates a scoring chance from 44% of RHBGs, well ahead of the next best in Gawn and English, both sitting at 30%.

43% of Kieren Briggs’ RHBGs end up with a clearance going the wrong way, while Sean Darcy is a rock – 25% of his RHBGs result in the ball not clearing the stoppage area.

Turning to Hitouts To Advantage, Jarrod Witts leads the league in seeing HTA turn to clearances with 83% ahead of Darcy Fort and Matt Flynn on 80%. 30% of Jordon Sweet’s HTAs result in a scoring chance, but he also has the highest number of HTAs turn into an opponent scoring chance at 9.2%. This highlights that these figures can be heavily influenced by the supporting midfielders. Port are electric when they’re on, but can lack some defensive accountability with the league’s worst opposition score from stoppages.
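For anyone wanting to reproduce these per-ruck percentages, here is a minimal sketch of the calculation. It assumes each RHBG or HTA has already been tagged with what happened next; the outcome labels and the sample list are made up for illustration and are not taken from my data.

```python
from collections import Counter

# Hypothetical outcome labels; a real data set may name these differently.
OUTCOMES = ("clearance_for", "clearance_against", "no_clearance")


def outcome_shares(events):
    """Return the percentage of events that ended in each outcome."""
    counts = Counter(events)
    total = sum(counts.values())
    if total == 0:
        # No events recorded, so every share is zero.
        return {outcome: 0.0 for outcome in OUTCOMES}
    return {outcome: 100 * counts[outcome] / total for outcome in OUTCOMES}


# Made-up sample: 20 ruck hard ball gets for a single ruck.
sample = (
    ["clearance_for"] * 16
    + ["clearance_against"] * 3
    + ["no_clearance"] * 1
)
shares = outcome_shares(sample)
print(shares)
```

With only a couple of dozen events per player, one or two contests going the other way shifts these percentages noticeably, which is worth keeping in mind when comparing rucks.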

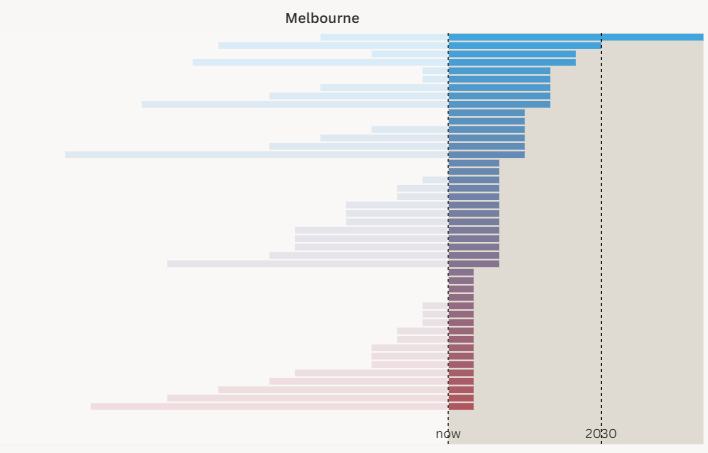

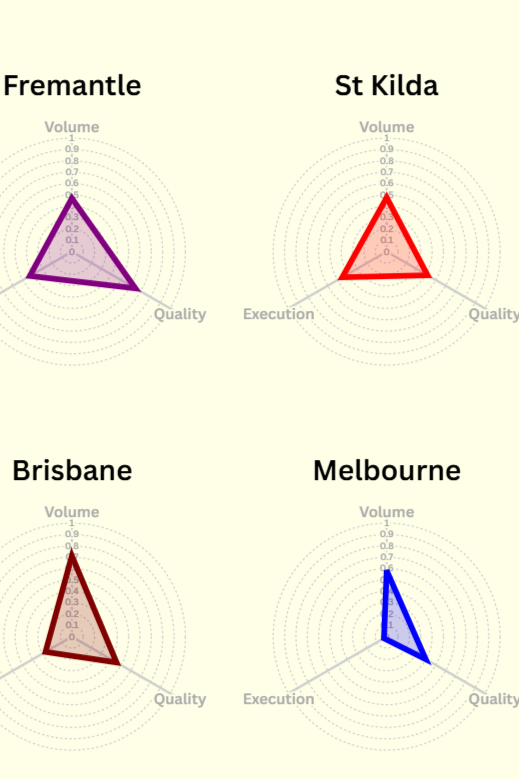

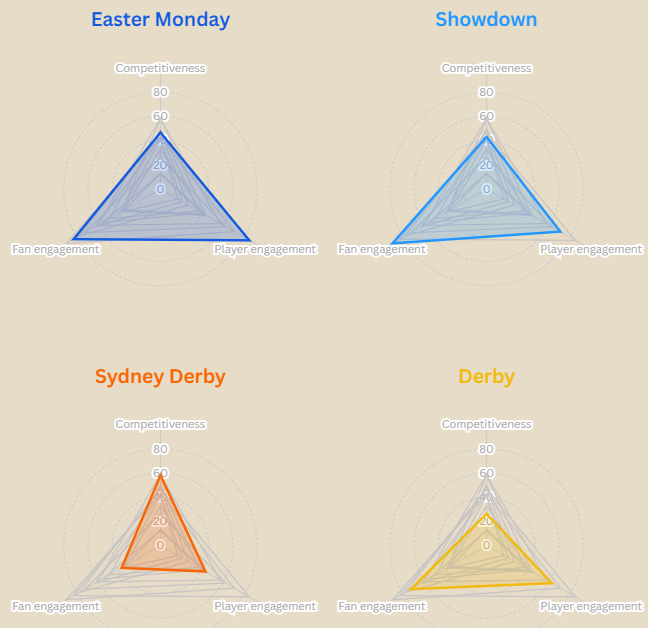

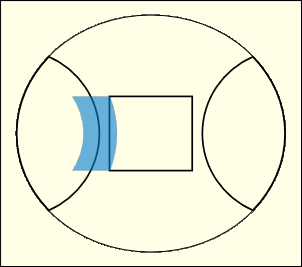

Now that we know what these outcomes are worth, let’s look at how good teams are at generating them. There are some limitations on the data I have. One is that I don’t know which two rucks went up against each other in a given contest, only the ones that record a stat (a ruck hard ball get, a hit out, or getting or conceding a ruck free). Because of that, we have to look at these stats team-wide rather than individually.

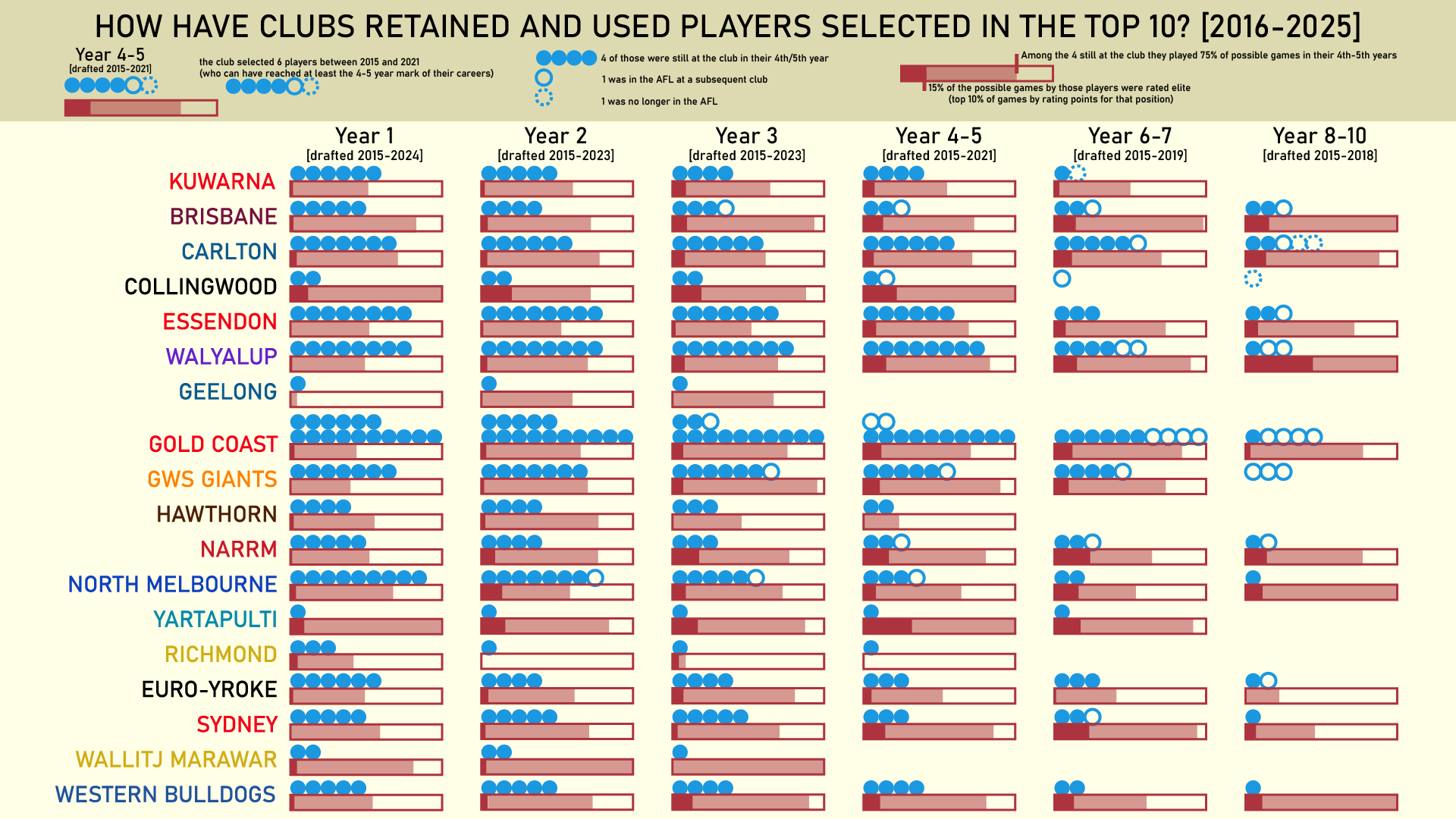

Melbourne, North Melbourne, Sydney, and Carlton are the best at generating positive outcomes – each getting a Ruck Hard Ball Get or better from at least 18.4% of their ruck contests compared to an AFL average of 15.4%.

Looking at the other end, West Coast, Essendon, St Kilda, and the Giants all give up good starting position relatively regularly. Looking at the differentials (% of contests gaining Ruck Hard Ball Get or better minus % of contests conceding the same), Melbourne and North Melbourne are clearly in front at +6%.
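The differential is simple arithmetic, sketched below. I’m assuming “Ruck Hard Ball Get or better” means the combined count of ruck hard ball gets, hit outs to advantage, and ruck frees; the function names and the numbers in the example are hypothetical and don’t belong to any real team.

```python
def pct(part, whole):
    """Percentage of `whole` made up by `part` (0.0 if there were no contests)."""
    return 100 * part / whole if whole else 0.0


def positive_outcome_differential(gained, conceded, contests):
    """% of contests where the team gained an RHBG or better,
    minus % of contests where it conceded the same."""
    return pct(gained, contests) - pct(conceded, contests)


# Made-up team totals: 500 ruck contests, 90 good outcomes won, 60 conceded.
diff = positive_outcome_differential(gained=90, conceded=60, contests=500)
print(f"{diff:+.1f}%")
```

A team with these totals would sit at 18% for and 12% against.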

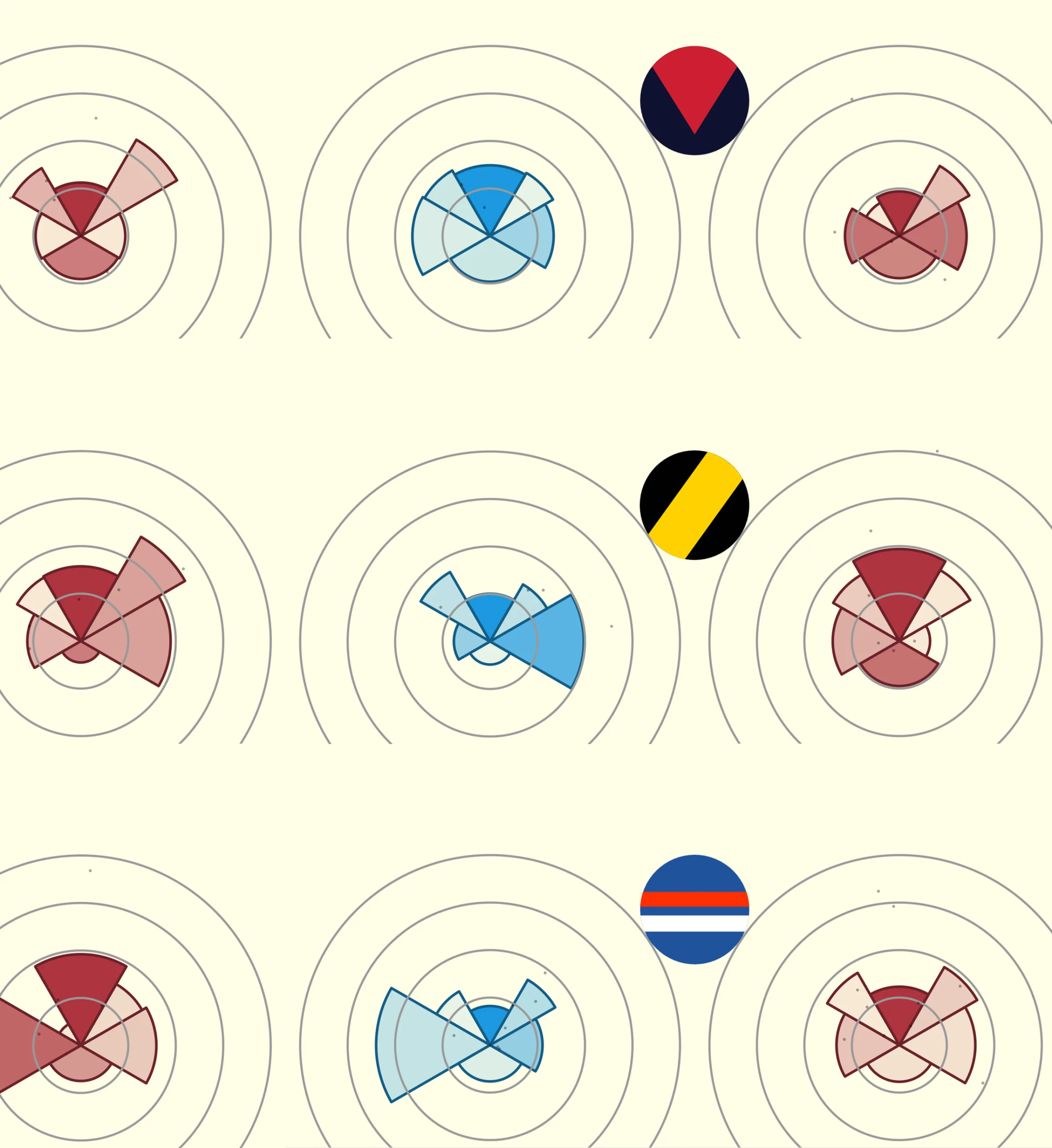

Now, ruck frees aren’t that common, occurring about once in every 36 contests. However, they are impactful (as we discovered earlier, 20% of them lead to a scoring chance), so they do warrant a further look.

Since 2021 the best players at generating more ruck frees than they give away are Ben McEvoy and Sam Hayes. In the opposite direction, Stefan Martin is the only player to break the -1 free per hundred contests barrier.
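The “per hundred contests” figure is just a net free kick count scaled by how often a ruck goes up. A rough sketch, with a hypothetical function name and invented numbers rather than any player’s real record:

```python
def net_frees_per_100(frees_for, frees_against, contests):
    """Net ruck frees (won minus conceded) per 100 ruck contests."""
    if contests == 0:
        return 0.0
    return 100 * (frees_for - frees_against) / contests


# Made-up career totals: 12 frees won, 20 conceded, across 640 contests.
rate = net_frees_per_100(frees_for=12, frees_against=20, contests=640)
print(rate)
```

Scaling by contests matters because a ruck who attends far more stoppages will rack up more frees in both directions, so raw counts alone would flatter or punish the workhorses.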

For a bit of fun let’s wind up with the head to head ruck free kick counts for the 15 rucks with the most hitouts since 2025.

The thing that jumps out to me here is just how hard Jarrod Witts is to ruck against. A lot of ruck frees seem to come when an experienced ruckman is up against a pinch hitter. Witts is posting big numbers against the elite rucks of the competition, with only Darcy Cameron (5-1) and Oscar McInerney (2-1) getting the better of him.

Finally, numbers can only tell us so much. I highly recommend Jeff White’s YouTube channel (https://www.youtube.com/@First_Use/videos). It contains a lot of video analysis, with a particular focus on ruck contests and stoppage play.