Sometimes it’s fun to just light some fires. What better way to do that than to attempt to rank the marquee games in the AFL fixture (and, before that, annoy people just by deciding which games get included in the rankings to begin with)?

My list of marquee games is as follows:

- Round One – Carlton v Richmond
- Easter Thursday – Brisbane v Collingwood
- Good Friday – North v Miscellaneous
- Easter Monday – Geelong v Hawthorn
- ANZAC Eve – Melbourne v Richmond
- ANZAC Day – Collingwood v Essendon
- King’s Birthday – Collingwood v Melbourne
- Dreamtime At The ‘G – Essendon v Richmond
- The Derby – Fremantle v West Coast
- The Q Clash – Brisbane v Gold Coast
- The Showdown – Adelaide v Port Adelaide
- The Sydney Derby – GWS v Sydney

I’m going to be rating them across several criteria based on the most recent 10 occurrences:

-

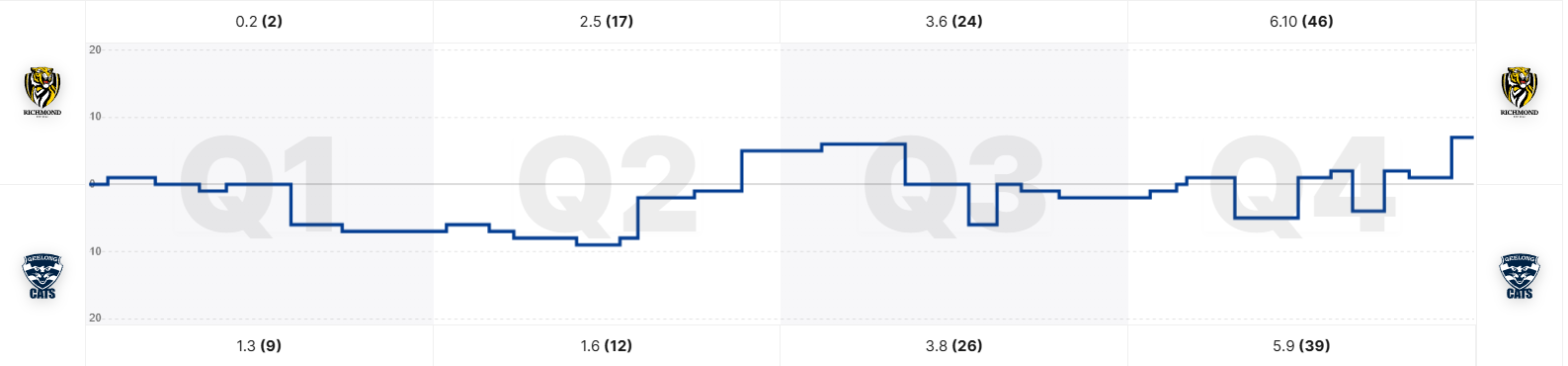

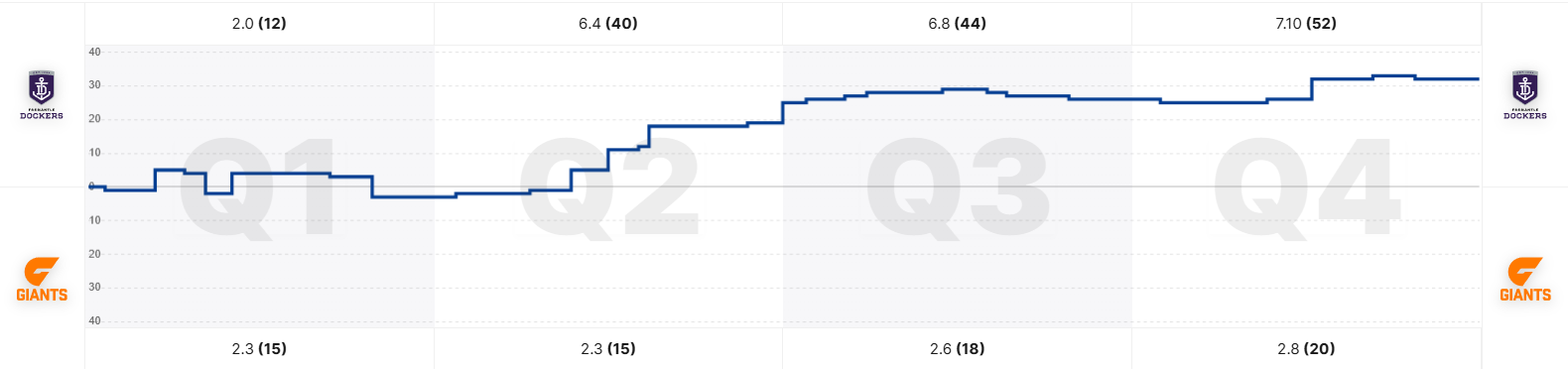

50% Competitiveness

-

Evenness of win-loss record

-

Mean margin (absolute, not team-specific)

-

Proportion of games decided by under two goals

-

-

25% Player engagement

-

MRO sanctions resulting from matches

-

Frees Against

-

-

25% Fan engagement

-

Attendance (Both raw and as a proportion of capacity)

-

Perceived rivalry/dislike

-

So, let’s get into it.

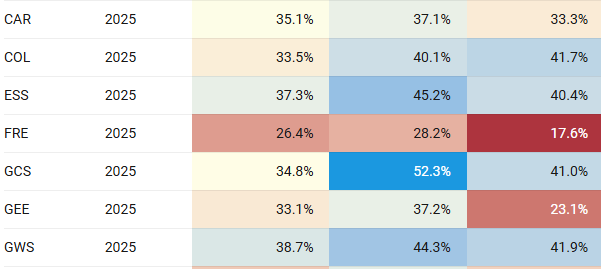

Competitiveness (50% of total)

Win-Loss Record (15% of total)

A pretty simple one here: we want to look at the team with the better win-loss record and see how far above 50% that record is.

So let’s start with the proportion of games won by the series winner and subtract 50%. A 50-50 win-loss split will yield 0, while a 10-0 sweep will yield 0.5.

Let’s multiply those results by 30, and then subtract the result from 15.

That will mean a perfectly even split will get the full 15 points on offer, while a sweep will get zero.
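The arithmetic above can be sketched in a few lines of Python. This is a minimal sketch, not the spreadsheet actually used for the rankings: the function name and the `games` parameter are my own, and it assumes no draws in the sample.

```python
def win_loss_score(series_leader_wins: int, games: int = 10) -> float:
    """Score out of 15: full marks for an even split, zero for a sweep.

    Hypothetical helper; assumes every game had a winner (no draws).
    """
    if games <= 0:
        raise ValueError("games must be positive")
    if not games / 2 <= series_leader_wins <= games:
        raise ValueError("the series leader must have won at least half the games")
    # How far the leader's record sits above 50% (0.0 to 0.5).
    excess = series_leader_wins / games - 0.5
    # Multiply by 30, then subtract from the 15 points on offer.
    return 15 - 30 * excess
```

Under this sketch a 7-3 split works out to roughly 9 of the 15 points.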

Mean margin (15% of total)

The highest mean margin we’ve got is just under 60 points, so we’ll set our baseline there. I think it’s reasonable that if the average match in the slot is a 10-goal blowout, you don’t get any points here.

Based on that, we take a quarter of the average margin and subtract that from 15 to get our points. Here we are looking at the margin regardless of who won – a match-up where one team is 10-0 but the average margin is 20 points will still score relatively well in this category.
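As a rough sketch of that calculation (the function name is made up, and the input is assumed to be a list of final margins in points, signed or unsigned):

```python
def mean_margin_score(margins: list[float]) -> float:
    """Score out of 15 based on the mean absolute margin.

    Hypothetical helper. Margins may be signed from one team's point of
    view; only their size is used, so it does not matter who won.
    """
    if not margins:
        raise ValueError("need at least one margin")
    mean_abs_margin = sum(abs(m) for m in margins) / len(margins)
    # A quarter of the average margin comes off the 15 points on offer,
    # so a 60-point average leaves nothing.
    return 15 - mean_abs_margin / 4
```

There is no floor at zero here, since the largest mean margin in the sample is described as just under 60 points.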

Proportion of close games (20% of total)

This does result in a little bit of double-counting with the previous measure, but I think the concept of a two-goal margin being a close game is well enough established that it warrants consideration.

Take the percentage of games decided by two goals or less, divide it by 10 (giving a number from 0-10), and double it to get our points.
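Here is a minimal sketch of the close-games score. It assumes the percentage is first put on a 0-10 scale (the same convention used for stadium capacity in the attendance score) so that doubling it tops out at the 20 points on offer. The function name and parameters are hypothetical, and deciding which games count as close is left to the caller.

```python
def close_game_score(close_games: int, games: int = 10) -> float:
    """Score out of 20 from the share of games that were close.

    Hypothetical helper. Assumes the percentage is scaled to 0-10
    before doubling, so that 100% close games earns the full 20.
    """
    if games <= 0 or not 0 <= close_games <= games:
        raise ValueError("close_games must be between 0 and games")
    percentage = 100 * close_games / games
    return 2 * (percentage / 10)
```

With 10 games in the sample this is the same as doubling the number of close games, so four close games would be worth 8 points.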

Player engagement (25% of total)

MRO sanctions (12.5% of total)

Look, I don’t want to glorify violence. Personally I think the league should take a much tougher stance on things like intentional strikes, regardless of impact. That being said, I think the sanctions handed out during a game can provide some degree of insight into the dislike two teams feel for each other.

For this, I’ve gone back through to the start of 2023 looking at each MRO report. Prior to that I didn’t have any confidence that I was able to surface every match, so I’ve drawn the line there.

Where a suspension has been recommended by the MRO, I’ve given it a number of points equal to the weeks suspended. Where a financial sanction has been recommended, I’ve awarded half a point. I’ve also excluded umpire contact from the sanctions in this criterion, as I don’t feel it gets at what we’re after.

Taking the average points over the matches in the sample period gave a maximum of just under 3, so we multiply the result by 5 to get our points here.
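The grading scheme can be sketched like this. The data layout (one list of sanctions per match, each a `(kind, weeks)` pair) is an assumption for illustration, not the format of the MRO reports, and umpire-contact charges are assumed to have been filtered out already.

```python
Sanction = tuple[str, int]  # ("suspension", weeks) or ("fine", 0); hypothetical layout


def match_points(sanctions: list[Sanction]) -> float:
    """Points for one match: weeks suspended, plus 0.5 per fine."""
    total = 0.0
    for kind, weeks in sanctions:
        if kind == "suspension":
            total += weeks
        elif kind == "fine":
            total += 0.5
        else:
            raise ValueError(f"unknown sanction kind: {kind!r}")
    return total


def mro_score(matches: list[list[Sanction]]) -> float:
    """Average points per match in the sample, multiplied by 5."""
    if not matches:
        raise ValueError("need at least one match")
    average = sum(match_points(m) for m in matches) / len(matches)
    return 5 * average
```

The sketch applies the multiplier exactly as described and does not cap the result, since the text does not say whether a cap is applied.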

Frees Against (12.5% of total)

Sanction by the MRO is a pretty high bar to clear, so I also wanted to look at a marker for lower-level ill-will and bad blood.

To do that I looked at the total frees against by each team, and compared that to that team’s average for that season. For 9 of the 12 games there was an increase, and the range of change went from -12% to +24%.

We’ll add 25% to it (producing a figure of up to 50%) and then divide it by 4 to fit into our 12.5 points.
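A minimal sketch of the frees-against score follows. It assumes the percentage change against the season average has already been worked out for the match-up, and that the shifted figure (up to 50) is divided by 4 so it tops out at 12.5 points. The function name is hypothetical.

```python
def frees_against_score(change_pct: float) -> float:
    """Score out of 12.5 from the change in frees against.

    Hypothetical helper. `change_pct` is the percentage change versus
    the season average, e.g. 24 for +24% or -12 for -12%.
    """
    shifted = change_pct + 25  # a change of -25% or worse scores nothing
    # Divisor of 4 is an assumption chosen so a shifted figure of 50
    # maps onto the 12.5 points on offer.
    return shifted / 4
```

On those assumptions the observed range of -12% to +24% would map to 3.25 and 12.25 points respectively.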

Fan engagement (25% of total)

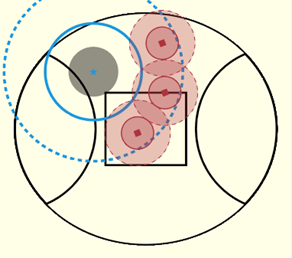

Attendance (12.5% of total)

Crowd absolutely has to come into this, and honestly I may be undervaluing it in the ratings a fair bit. I want to recognise both the sheer number of people, and the degree to which a stadium is packed out.

I’ve taken the average crowd over the last 10 matches (excluding games impacted by COVID) as well as the average proportion of stadium capacity filled. I don’t want this to just be whichever match happens to be played at the MCG coming out on top, so I’ve weighted the proportion of capacity more heavily than the raw crowd figures.

I’ve taken the average crowd divided by 10,000 and then multiplied that by 1.25 – giving a maximum of 12.5 for an average 100,000 crowd.

I’ve then taken the average percentage of capacity filled and divided it by 10 (giving a number from 0-10) and multiplied that by 3.75 (a number from 0-37.5). That gives us a total of 50 points, which we’ll divide by 4 to fit into our 12.5.
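Put together, the two attendance steps look something like this. It is a sketch only; the function and parameter names are my own.

```python
def attendance_score(avg_crowd: float, avg_capacity_pct: float) -> float:
    """Score out of 12.5 from average crowd and average % of capacity.

    Hypothetical helper. `avg_capacity_pct` is on a 0-100 scale.
    """
    # Raw crowd: 0-12.5, hitting the maximum at an average of 100,000.
    crowd_part = avg_crowd / 10_000 * 1.25
    # Capacity filled: 0-37.5, so it carries three times the weight.
    capacity_part = avg_capacity_pct / 10 * 3.75
    # The two parts sum to at most 50; divide by 4 to fit into 12.5.
    return (crowd_part + capacity_part) / 4
```

For example, an average crowd of 40,000 in a ground that is 80% full on average would come out at 8.75 under this sketch.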

Perceived rivalry (12.5% of total)

The guys over at The Back Pocket have very kindly given me a sneak peek at their 2025 fan rivalry survey. The survey asks which team you support, and which three teams you dislike (not in a specific order).

I’ve used that to create a list of the three most disliked teams for each supporter base and given 3, 2, 1, or 0 points depending on where the opponent sits in that list (0 if they miss the top three). Add that to the same figure from the opponent’s supporters and we’ve got a rating from 0-6, which we’ll then scale up to between 0 and 12.5.
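Here is one way the rivalry rating could be computed. It is a sketch under a few assumptions: each supporter base's list is ordered from most to least disliked, the most disliked team earns the 3 points, and the 0-6 rating is scaled linearly. The function names are hypothetical and no survey data is included.

```python
def dislike_points(most_disliked: list[str], opponent: str) -> int:
    """3, 2 or 1 points for 1st, 2nd or 3rd most disliked; otherwise 0."""
    top_three = most_disliked[:3]
    if opponent in top_three:
        return 3 - top_three.index(opponent)
    return 0


def rivalry_score(
    team_a: str, a_dislikes: list[str], team_b: str, b_dislikes: list[str]
) -> float:
    """Score out of 12.5 from how much each fan base dislikes the other."""
    raw = dislike_points(a_dislikes, team_b) + dislike_points(b_dislikes, team_a)
    # Linear scaling from the 0-6 rating to 0-12.5 is an assumption.
    return raw / 6 * 12.5
```

If each club is the other's most disliked team, the match-up gets the full 12.5.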

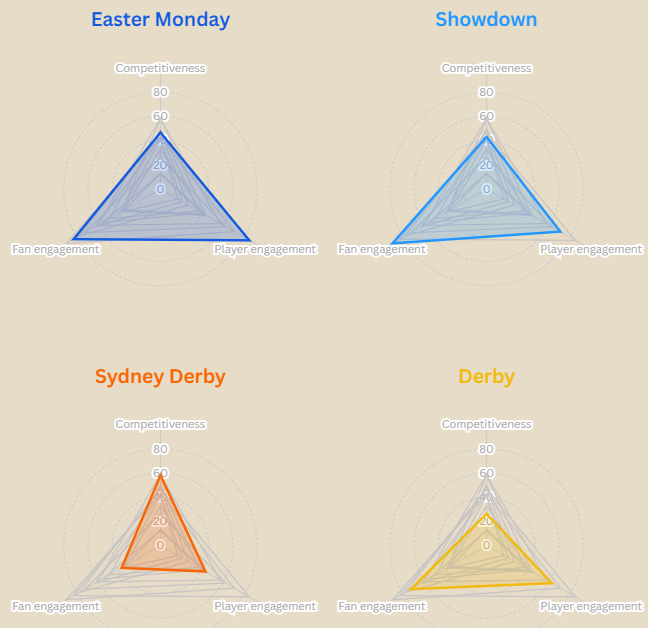

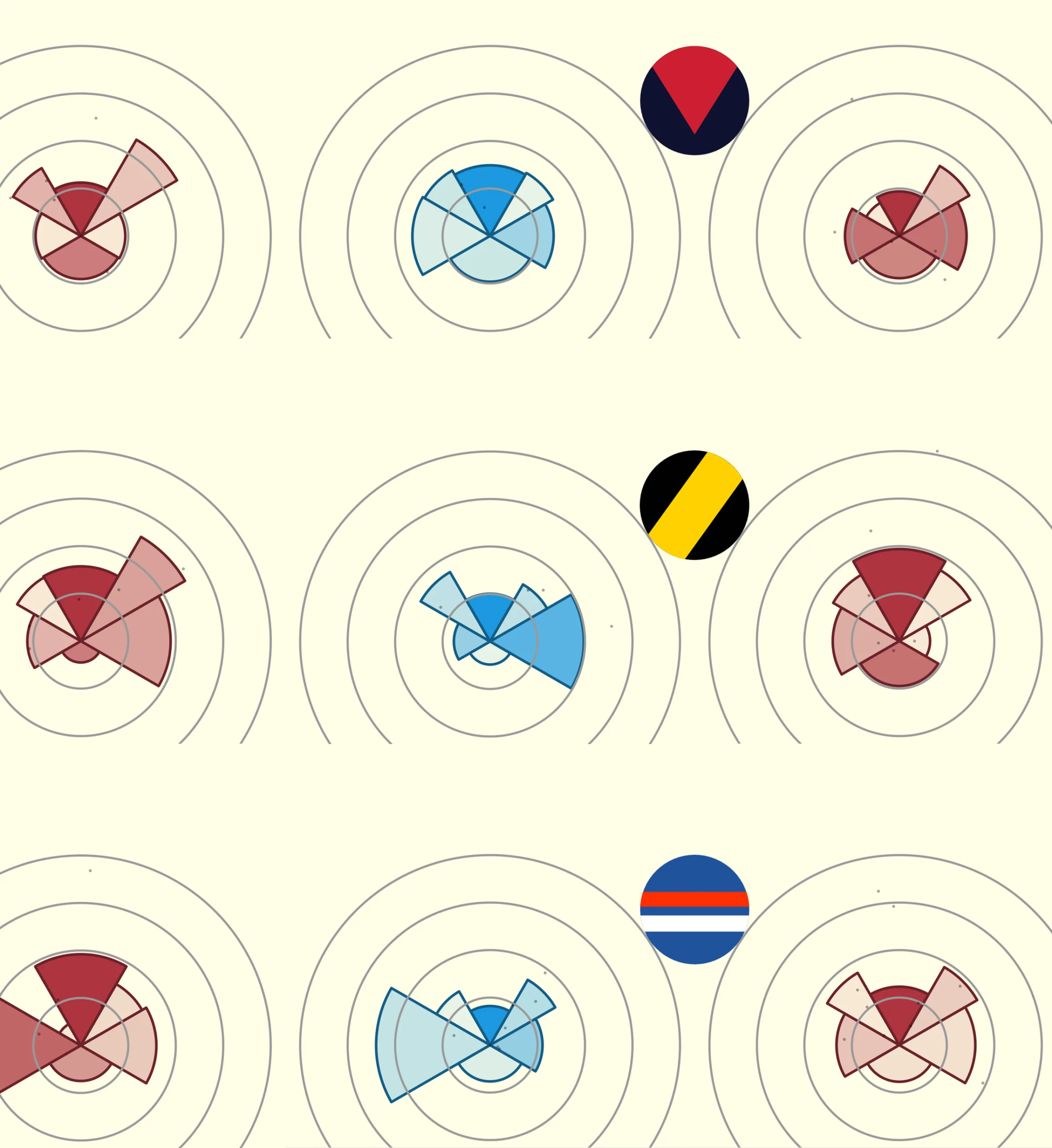

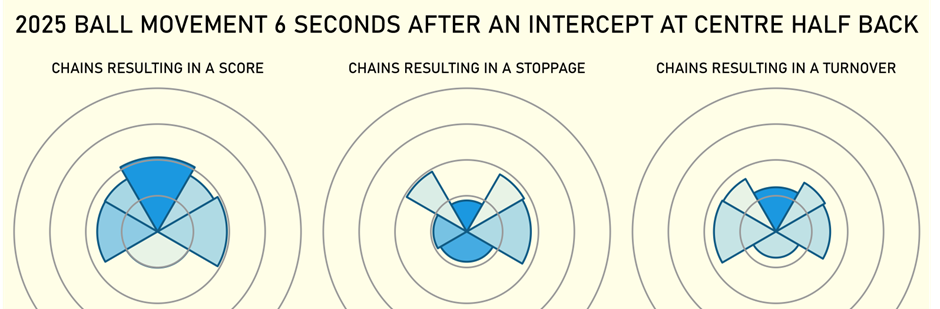

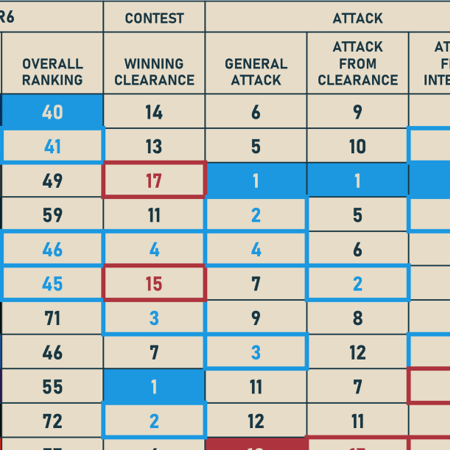

Combining those elements gives us the following ratings out of 100, and our final rankings.

Overall I’m pretty happy with these rankings – they match up fairly closely to my interest level in watching the various fixtures from a neutral perspective.

To further visualise where each match-up is rated as stronger, this radar chart converts each component to a rating out of 100.
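For anyone wanting to reproduce the totals and the radar values, a minimal sketch is below. The component keys are names I have made up; the maximum points per component come from the weightings above.

```python
# Maximum points per component, taken from the stated weightings.
MAX_POINTS = {
    "win_loss": 15.0,
    "mean_margin": 15.0,
    "close_games": 20.0,
    "mro": 12.5,
    "frees_against": 12.5,
    "attendance": 12.5,
    "rivalry": 12.5,
}


def total_rating(components: dict[str, float]) -> float:
    """Overall rating out of 100: the sum of the seven component scores."""
    if set(components) != set(MAX_POINTS):
        raise ValueError("expected exactly the seven named components")
    return sum(components.values())


def radar_values(components: dict[str, float]) -> dict[str, float]:
    """Rescale each component to a rating out of 100 for a radar chart."""
    if set(components) != set(MAX_POINTS):
        raise ValueError("expected exactly the seven named components")
    return {name: 100 * score / MAX_POINTS[name] for name, score in components.items()}
```

Rescaling each component by its own maximum is what lets a 12.5-point category sit on the same axis as a 20-point one.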

There you go, an objective and scientific approach to ranking the marquee games. Feel free to come yell at me on Bluesky about how you disagree.